Unlocking the Secrets of Aging Blood Cells: Researchers Discover Novel Role of MLKL in Stem Cell Decline

As individuals age, the body’s intricate biological systems undergo a gradual decline, and perhaps none is as fundamental to our health and resilience as the blood and immune systems. At the core of this decline lies the diminished capacity of hematopoietic stem cells (HSCs), the progenitors responsible for generating every type of blood cell. While healthy HSCs self-renew and maintain a precise balance of blood cell populations, their efficacy wanes with time. This age-related compromise manifests as a reduced output of new cells, a bias toward myeloid cells at the expense of lymphoid cells, and a consequently weakened ability to mount robust immune responses. Understanding the mechanisms driving this deterioration is essential to developing interventions that can support health in later life.

The Multifaceted Assault on Hematopoietic Stem Cells

The aging process is not a singular event but rather a complex interplay of accumulating cellular insults. Scientists have identified several key contributors to the decline in HSC function, including the insidious accumulation of DNA damage, subtle yet significant alterations in gene expression patterns, a persistent state of low-grade chronic inflammation, and dynamic shifts within the bone marrow microenvironment – the specialized niche where HSCs reside and mature. Despite considerable progress, a comprehensive understanding of how these diverse stressors converge to impair HSC performance has remained elusive, presenting a significant challenge in the quest to combat age-related maladies.

Investigating a Crucial Aging Pathway: The RIPK3-MLKL Axis

To unravel this complex puzzle, researchers at The University of Tokyo, Japan, and St. Jude Children’s Research Hospital, USA, collaborated on a detailed investigation into how age-related stressors impact HSCs. Their focus converged on the receptor-interacting protein kinase 3 (RIPK3)-mixed lineage kinase domain-like (MLKL) signaling axis. This pathway is primarily recognized for its critical role in necroptosis, a highly regulated form of programmed cell death that serves as a defense mechanism against certain pathogens and cellular damage. However, the researchers suspected that the pathway's influence might extend beyond cell death.

The study was led by Dr. Masayuki Yamashita, who was an Assistant Professor at The Institute of Medical Science, The University of Tokyo, at the time of the research and is now an Assistant Member at St. Jude Children’s Research Hospital. His colleagues included Dr. Atsushi Iwama of The Institute of Medical Science, The University of Tokyo, and Dr. Yuta Yamada of St. Jude Children’s Research Hospital, who was a graduate student at The Institute of Medical Science, The University of Tokyo, during the study. Their work led to a finding that could change how stem cell biology and aging are understood.

A Surprising Revelation: MLKL’s Non-Lethal Role in Stem Cell Aging

The initial spark for this research came from an unexpected observation made by Dr. Yamashita and his team. "We discovered an unexpected phenotype in HSCs of MLKL-knockout mice repeatedly treated with 5-fluorouracil, where aging-associated functional changes were markedly attenuated despite no detectable difference in HSC death, prompting us to investigate whether this pathway might induce functional changes beyond cell death," Dr. Yamashita recounted. This observation was pivotal: it suggested that MLKL could influence the aging process of stem cells without actually triggering their demise. This hypothesis became the focus of their investigation, which was published in Volume 17 of Nature Communications on April 6, 2026.

This discovery challenged conventional wisdom surrounding necroptosis and its molecular effectors. Historically, the RIPK3-MLKL pathway was viewed primarily through the lens of cell death. However, the data from these experiments strongly indicated that MLKL might possess a dual functionality, capable of orchestrating cellular senescence and functional decline through mechanisms independent of outright cell lysis. This opened up an entirely new avenue of inquiry into the nuanced ways cellular pathways can contribute to the aging phenotype.

Delving Deeper: Experimental Design and Methodologies

To rigorously test their hypothesis that MLKL could impair HSC function without causing cell death, the researchers meticulously designed a series of experiments utilizing a range of genetically modified mouse models. They employed wild-type mice as a baseline, alongside MLKL-deficient and RIPK3-deficient models to assess the specific contributions of these proteins. Further enhancing their investigative toolkit, they incorporated specialized reporter mice engineered with a Förster resonance energy transfer (FRET)-based biosensor. This innovative technology allowed for the real-time detection and visualization of MLKL activation within living cells, providing unprecedented insight into its dynamic behavior.

The experimental paradigm involved exposing these mice to various stress conditions designed to mimic the cumulative insults experienced during aging. These stressors included chronic inflammation, replication stress (which arises when DNA replication is slowed or stalled, as can happen when cells are forced to divide repeatedly), and oncogenic stress (mimicking the cellular conditions that can lead to cancer). HSC function was assessed primarily through bone marrow transplantation assays. This established technique involves transplanting HSCs into recipient mice that have been conditioned to accept new stem cells, thereby allowing researchers to evaluate the regenerative capacity and long-term engraftment potential of the transplanted HSCs. A successful transplantation, indicated by the restoration of blood cell production in the recipient, is a direct measure of stem cell health and functionality.

Complementing the transplantation assays, the research team employed an array of molecular and cellular biology techniques to gain a multi-level understanding of MLKL’s impact. These included flow cytometry for detailed cell population analysis, ex vivo expansion to assess stem cell proliferation potential in controlled laboratory settings, RNA sequencing (RNA-seq) to map gene expression profiles, assay for transposase-accessible chromatin with sequencing (ATAC-seq) to measure chromatin accessibility and regulatory element activity, high-resolution imaging for visualizing cellular structures, metabolic profiling to assess cellular energy production, and detailed studies of mitochondrial function. The combined use of these methods enabled the researchers to dissect the molecular and structural changes induced by MLKL activation in HSCs.

Mitochondrial Damage: The Unseen Consequence of MLKL Activation

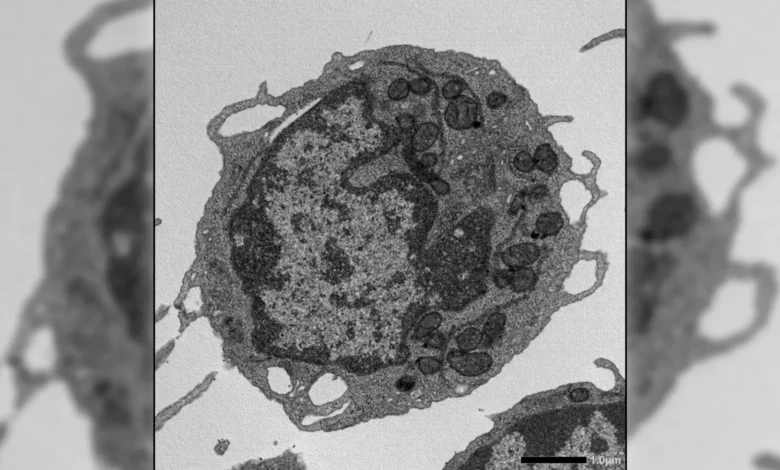

The results of their comprehensive investigation yielded a remarkable and previously unrecognized role for MLKL in the aging of stem cells. Contrary to its established role in necroptosis, the activation of MLKL within HSCs did not lead to an increase in cell death or a reduction in the overall number of stem cells. Instead, MLKL exerted its influence through a more subtle, yet profoundly detrimental, mechanism.

Upon activation under conditions of cellular stress, MLKL was observed to transiently translocate to the mitochondria – the powerhouses of the cell responsible for generating adenosine triphosphate (ATP), the primary energy currency. Once at the mitochondria, MLKL initiated a cascade of damage. It was found to disrupt the mitochondrial membrane potential, a critical electrochemical gradient essential for efficient energy production. Furthermore, it altered the structural integrity of mitochondria and significantly impaired their ability to generate energy. These mitochondrial dysfunctions were directly linked to the hallmark features of HSC aging: a diminished capacity for self-renewal, a reduced output of essential lymphoid cells, and a pronounced bias towards the overproduction of myeloid cells. This discovery shifted the paradigm, highlighting that cellular damage, rather than cell death, could be a primary driver of aging in these critical cells.

Preserving Youthful Function: The Protective Effects of MLKL Inhibition

The importance of MLKL became clear when the researchers examined HSCs in its absence. When MLKL was genetically removed or pharmacologically inactivated, many of the age-related functional deficits in HSCs were significantly ameliorated. HSCs lacking MLKL demonstrated a robust ability to regenerate the blood system, produced healthier and more diverse immune cells, exhibited reduced levels of DNA damage, and maintained superior mitochondrial function. These beneficial effects were evident even in aged animals and under conditions of significant cellular stress, underscoring the broad impact of MLKL on stem cell resilience.

Intriguingly, these improvements in stem cell function occurred without substantial alterations in global gene expression or chromatin accessibility. This observation suggested that MLKL’s influence on aging operates at a post-transcriptional level, primarily affecting cellular structures and metabolic processes rather than directly modulating DNA regulation or inflammatory signaling pathways. This finding is particularly significant, as it implies that interventions targeting MLKL might offer a way to improve stem cell function by addressing the functional consequences of stress, rather than attempting to reverse complex genetic or epigenetic changes.

Implications for Aging and the Future of Regenerative Therapies

The implications of this research extend far beyond a fundamental understanding of stem cell aging. By identifying MLKL as a critical nexus connecting various forms of cellular stress to mitochondrial dysfunction and subsequent stem cell aging, the study illuminates a common pathway that underpins age-related decline in the hematopoietic system. This discovery offers a novel therapeutic target and a fresh perspective on how to approach age-related diseases affecting blood and immunity.

Dr. Yamashita expressed optimism about the long-term potential of these findings. "In the longer term, this research could lead to therapies that preserve the function of hematopoietic stem cells, ultimately improving recovery and long-term health for patients undergoing chemotherapy, radiation, or transplantation," he stated. "By revealing how non-lethal activation of cell-death pathways drives stem cell aging, these findings may inspire new classes of mitochondrial-protective or necroptosis-modulating drugs." The potential to enhance the resilience of stem cells in patients undergoing intensive medical treatments, such as those for cancer, could revolutionize recovery outcomes and significantly improve their quality of life.

A Paradigm Shift in Stem Cell Aging Research

In summary, this study reveals a previously unrecognized role for MLKL in the aging of hematopoietic stem cells. It shows that MLKL, while a key component of the necroptosis pathway, can also contribute to stem cell aging through non-lethal mechanisms that directly impair mitochondrial function. This finding challenges the traditional, death-centric view of necroptosis-related proteins and opens new avenues for therapeutic intervention. By understanding how stress, mediated by MLKL, leads to mitochondrial damage and weakens HSC function over time, scientists are better equipped to develop strategies aimed at slowing or preventing the age-related decline of the blood and immune systems. The work by Dr. Yamashita and his team advances fundamental knowledge of aging and points toward treatments that could extend healthspan.